Dask APIs generally follow from upstream APIs:

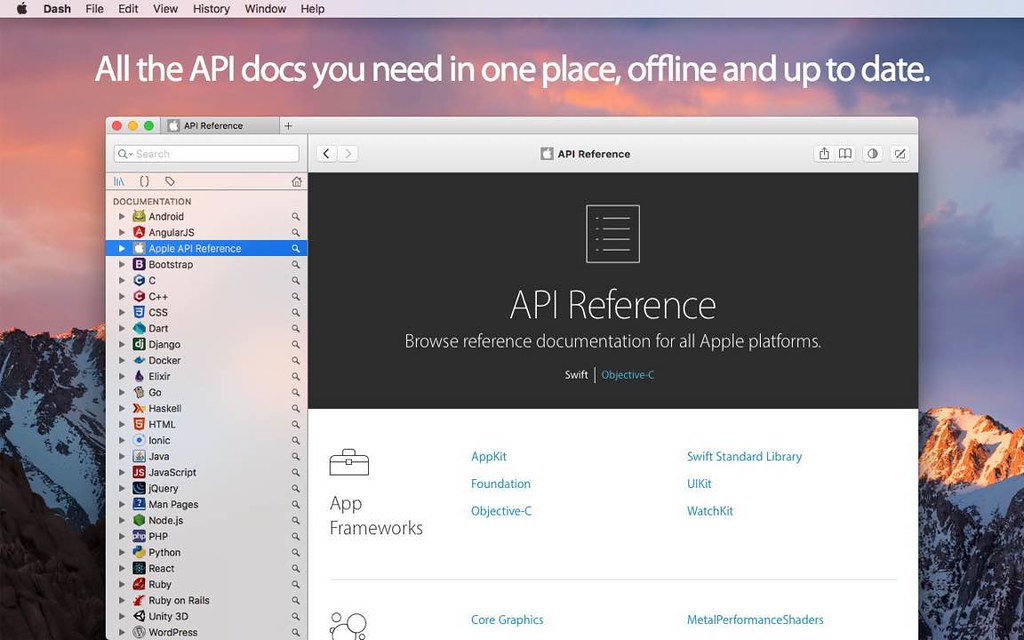

- Arrays follow NumPy

- DataFrames follow Pandas

- Bag follows map/filter/groupby/reduce common in Spark and Python iterators

- Dask-ML follows Scikit-Learn and others

- Delayed wraps general Python code

- Futures follows concurrent.futures from the standard library for real-time computation
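Because the Futures interface is modelled on concurrent.futures, it can help to see the standard-library pattern on its own. The sketch below uses only the standard library and no Dask; `square` is an illustrative function, not part of any API.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def square(x):
    # Illustrative workload; any picklable-free callable works with threads
    return x * x


with ThreadPoolExecutor(max_workers=4) as executor:
    # submit() returns immediately with a Future for each call
    futures = [executor.submit(square, i) for i in range(5)]
    # as_completed() yields futures in completion order, so sort for stability
    results = sorted(f.result() for f in as_completed(futures))

print(results)
```

A dask.distributed.Client exposes the same submit/result style, so code written against this pattern should look familiar there.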

Additionally, Dask has its own functions to start computations, persist data in memory, check progress, and so forth that complement the APIs above. These more general Dask functions are described below:

| Function | Description |
|---|---|
| `compute(*args, **kwargs)` | Compute several dask collections at once. |
| `is_dask_collection(x)` | Returns True if x is a dask collection. |
| `optimize(*args, **kwargs)` | Optimize several dask collections at once. |
| `persist(*args, **kwargs)` | Persist multiple Dask collections into memory. |
| `visualize(*args, **kwargs)` | Visualize several dask graphs at once. |
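As a small illustration of the simplest helper listed above, the following sketch checks which objects count as Dask collections. It uses dask.delayed only to have a collection at hand; the variable names are illustrative.

```python
import dask
from dask import delayed

# A lazy call to the built-in sum(); nothing is computed yet
lazy_sum = delayed(sum)([1, 2, 3])

checks = {
    "delayed object": dask.is_dask_collection(lazy_sum),
    "list of delayed": dask.is_dask_collection([lazy_sum]),
    "plain list": dask.is_dask_collection([1, 2, 3]),
    "plain int": dask.is_dask_collection(42),
}

for label, is_collection in checks.items():
    print(f"{label}: {is_collection}")
```

Note that a Python list holding a delayed object is not itself a collection, even though functions such as compute will look inside it.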


These functions work with any scheduler. More advanced operations are available when using the newer scheduler and starting a dask.distributed.Client (which, despite its name, runs nicely on a single machine). This API provides the ability to submit, cancel, and track work asynchronously, and includes many functions for complex inter-task workflows. These are not necessary for normal operation, but can be useful for real-time or advanced operation.

This more advanced API is available in the Dask distributed documentation.

`dask.compute(*args, **kwargs)`

Compute several dask collections at once.


Examples

By default, dask objects inside python collections will also be computed:
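A minimal sketch of both behaviours, assuming dask.delayed as the collection type; the `inc` helper is made up for illustration.

```python
import dask


@dask.delayed
def inc(x):
    # Illustrative task: runs only when computed
    return x + 1


a = inc(1)
b = inc(2)

# Several collections computed in one call; the result is a tuple
results = dask.compute(a, b)

# Dask objects nested inside a Python dict are found and computed too,
# while ordinary values pass through unchanged
nested = dask.compute({"a": a, "b": b, "c": 1})

print(results)
print(nested)
```

Even with a single argument, compute returns a tuple, so the dict above comes back wrapped in a one-element tuple.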

`dask.is_dask_collection(x)`

Returns True if x is a dask collection.

`dask.optimize(*args, **kwargs)`

Optimize several dask collections at once.

Returns equivalent dask collections that all share the same merged and optimized underlying graph. This can be useful if converting multiple collections to delayed objects, or to manually apply the optimizations at strategic points.

Note that in most cases you shouldn’t need to call this method directly.


Examples
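The sketch below shows one way optimize might be called on two delayed results that share an intermediate task. The `inc` and `add` helpers are illustrative; the only claim checked is that the optimized collections compute to the same values as the originals.

```python
import dask


@dask.delayed
def inc(x):
    return x + 1


@dask.delayed
def add(x, y):
    return x + y


shared = inc(1)          # intermediate task used by both results
b = add(shared, 10)
c = add(shared, 20)

# Returns new collections, one per input, in the same order
b2, c2 = dask.optimize(b, c)

original = dask.compute(b, c)
optimized = dask.compute(b2, c2)

print(original, optimized)
```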

`dask.persist(*args, **kwargs)`

Persist multiple Dask collections into memory.

This turns lazy Dask collections into Dask collections with the same metadata, but now with their results fully computed or actively computing in the background.

For example, a lazy dask.array built up from many lazy calls will now be a dask.array of the same shape, dtype, chunks, etc., but now with all of those previously lazy tasks either computed in memory as many small numpy.array objects (in the single-machine case) or asynchronously running in the background on a cluster (in the distributed case).

This function operates differently if a dask.distributed.Client exists and is connected to a distributed scheduler. In this case this function will return as soon as the task graph has been submitted to the cluster, but before the computations have completed. Computations will continue asynchronously in the background. When using this function with the single machine scheduler it blocks until the computations have finished.

When using Dask on a single machine you should ensure that the dataset fits entirely within memory.


Examples
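A minimal single-machine sketch, assuming dask.array (and therefore NumPy) is installed and no distributed Client is active. The array sizes are arbitrary and chosen only to keep the example small.

```python
import dask
import dask.array as da

# Build a lazy array from several lazy operations
x = da.ones((1000, 1000), chunks=(250, 250))
y = (x + 1).sum(axis=0)

# On the single-machine scheduler this call blocks until the work is done.
# persist always returns a tuple, one entry per input collection.
(y_persisted,) = dask.persist(y)

# The persisted array keeps the same metadata as the lazy one
same_metadata = (
    y_persisted.shape == y.shape
    and y_persisted.dtype == y.dtype
    and y_persisted.chunks == y.chunks
)

# Further computation starts from the in-memory chunks
total = float(y_persisted.sum().compute())

print(same_metadata, total)
```

The result of persist is still a Dask collection, so it can be passed to further lazy operations or to compute as usual.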

`dask.visualize(*args, **kwargs)`

Visualize several dask graphs at once.

Requires graphviz to be installed. All options that are not the dask graph(s) should be passed as keyword arguments.

See also

dask.dot.dot_graph

Notes

For more information on optimization see here:

Examples

Datasets

Dask has a few helpers for generating demo datasets.

`dask.datasets.make_people(npartitions=10, records_per_partition=1000, seed=None, locale='en')`

Make a dataset of random people.

This makes a Dask Bag with dictionary records of randomly generated people. This requires the optional library mimesis to generate records.


`dask.datasets.timeseries(start='2000-01-01', end='2000-01-31', freq='1s', partition_freq='1d', dtypes={'id': int, 'name': str, 'x': float, 'y': float}, seed=None, **kwargs)`

Create timeseries dataframe with random data.


Examples

Utilities

Dask has some public utility methods. These are primarily used for parsing configuration values.

`dask.utils.format_bytes(n)`

Format bytes as text.

`dask.utils.format_time(n)`

Format integers as time.

`dask.utils.parse_bytes(s)`

Parse byte string to numbers.

`dask.utils.parse_timedelta(s, default='seconds')`

Parse timedelta string to number of seconds.

Examples
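The sketch below exercises the four utilities together. The input strings are illustrative. Expected values are stated only for the parsing functions, since the exact text produced by the formatting functions can differ between Dask versions.

```python
from dask.utils import format_bytes, format_time, parse_bytes, parse_timedelta

# Parsing: human-readable strings to plain numbers
megabytes = parse_bytes("100 MB")      # decimal unit: 100 * 10**6 bytes
kibibyte = parse_bytes("1kiB")         # binary unit: 1024 bytes
seconds = parse_timedelta("3s")        # number of seconds
fraction = parse_timedelta("300ms")    # sub-second values come back as floats

# Formatting: numbers back to short human-readable text
size_text = format_bytes(megabytes)
time_text = format_time(seconds)

print(megabytes, kibibyte, seconds, fraction)
print(size_text, time_text)
```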